-

Demystify and eliminate hitches in the render phase

When you implement complex view hierarchies in your app, you may run into animation hitches. Demystify how your views are turned into pixels during the render phase, and learn how to use Instruments to uncover issues in this part of the render loop. Discover how to eliminate offscreen passes and leverage Xcode optimization opportunities in order to provide a great experience when using your app.

Resources

Related Videos

WWDC23

Tech Talks

-

Search this video…

Hi. I'm Patrick from the OS performance team at Apple. Today we're gonna dive into demystifying and eliminating render hitches in your apps.

iOS uses the Render Loop to display your views, and hitch is anytime the Render Loop does not complete a frame in time for display.

For an overview of the entire Render Loop, check out my talk "Explore UI Animation Hitches and The Render Loop." Here we'll focus on render hitches, which are hitches caused by slowness in the render prepare and render execute phases.

First, we'll look at what are these two render phases, then see how to catch and triage render hitches in our app using Instruments and the Xcode view debugger. Lastly, we'll look at some recommendations to optimize our layer tree and stop hitches from interrupting our user experience.

Let's start by defining the render phases.

During the commit phase, apps modify their UI and submit updated UI layer trees for processing. We call these submissions "commits," and the render server is responsible for rendering commits for all foreground processes. If the work in the render server takes longer than one frame duration, it can hitch. Although the work happens outside the app's process, the rendering work is done on your app's behalf, so you are responsible for how long it takes to render your app's layer tree.

The render server has two phases: render prepare and render execute.

The render prepare phase is where our layer tree is compiled down into a pipeline of simple operations for the GPU to execute. Animations which take place over a couple of frames are also handled here. During the render execute phase, the GPU draws the app's layers into a final image ready to be displayed. Either of these phases could delay the frame's delivery time.

To help understand these concepts, let's walk through an example render.

We will walk through the rendering of this frame. Notice that the shadow is around both the circle and the bar. This will become important later. We start with the layer tree that the app submitted to the render server on the left. The render server will step layer by layer to compile a pipeline of drawing commands that allows the GPU to draw the UI from back to front.

Starting at the root node, the render server walks from sibling to sibling and parent to child until it has every layer in the hierarchy.

Finally, it has the entire pipeline that the GPU can execute during the next execute phase. The GPU's job is to take this pipeline and draw each step into the final texture in the center. It's this texture that will be displayed on screen during the display phase. Starting with the first blue layer, it draws the color in the specified bounds. Next, the darker blue is drawn into its bounds, and we move on to the next layer.

But now, the GPU must draw the shadow. The shadow shape is defined by the next two layers, so the GPU does not know what shape to draw the shadow with. If we drew the circle and bar first, though, then the shadow would occlude them with black and it would look incorrect. That means the GPU has hit a roadblock, and to continue, it must switch to a different texture to figure out the shadow's shape. We call this "offscreen rendering" because we are drawing somewhere other than the final texture. From here, it can draw the circle and the bar. And now it has the shadow shape isolated in the offscreen texture. It has all it needs to make the shadow shape by first making the layers black and then blurring it.

It can then copy that offscreen texture into the final texture, and the shadow layer has been completed.

The next step is to draw the circle and then the rectangle again.

It will finish by copying the image of the text that the app drew on top.

We have now completed both render phases, and the frame is ready to be displayed. But we did have to do a special trick to render the shadow, which caused our rendering to take longer. This is called an offscreen pass.

An offscreen pass is anytime the GPU must render a layer by first rendering it somewhere else and then copying it over. With the shadow, it had to draw the layers to figure out the final shape. Offscreen passes can add up and cause the rendering to hitch so it's important to monitor and try to avoid them in your app.

There are four main types of offscreen passes that can be optimized: shadows, masks, rounded rectangles and visual effects.

We saw an example of a shadow offscreen in the example render. In this case, the renderer does not have enough information to draw the shadow without drawing the layer it is attached to first.

The second type of offscreen is when a layer or tree of layers requires masking. The renderer needs to render the masked subtree. But it also needs to avoid overwriting the pixels outside the masked shape. So it will render the entire subtree offscreen before copying only the pixels within the masked shape back to the final texture. This offscreen can lead to rendering many pixels that the user is never going to see.

The third type is related to masking. Rounding the corners of layers can sometimes require an offscreen. If not given enough information, the renderer may have to draw the entire view offscreen and then copy the pixels inside the rounded shape back.

The fourth type is from visual effect views. UI Kit provides two visual effect types: vibrancy and blurring. To apply these effects, the renderer must copy what's beneath the visual effect view to another texture with an offscreen pass. Then it applies the visual effect types to the result and copies it back. You will see this in UI navigation bars, UI tab bars and many other standard controls, as it's very common across iOS, tvOS and macOS.

So these four offscreen types can slow down the rendering and cause render hitches.

Now that we've described the details of the render phase and seen how a high number of offscreen passes can cause them, let's move to our second topic: finding hitches with Instruments.

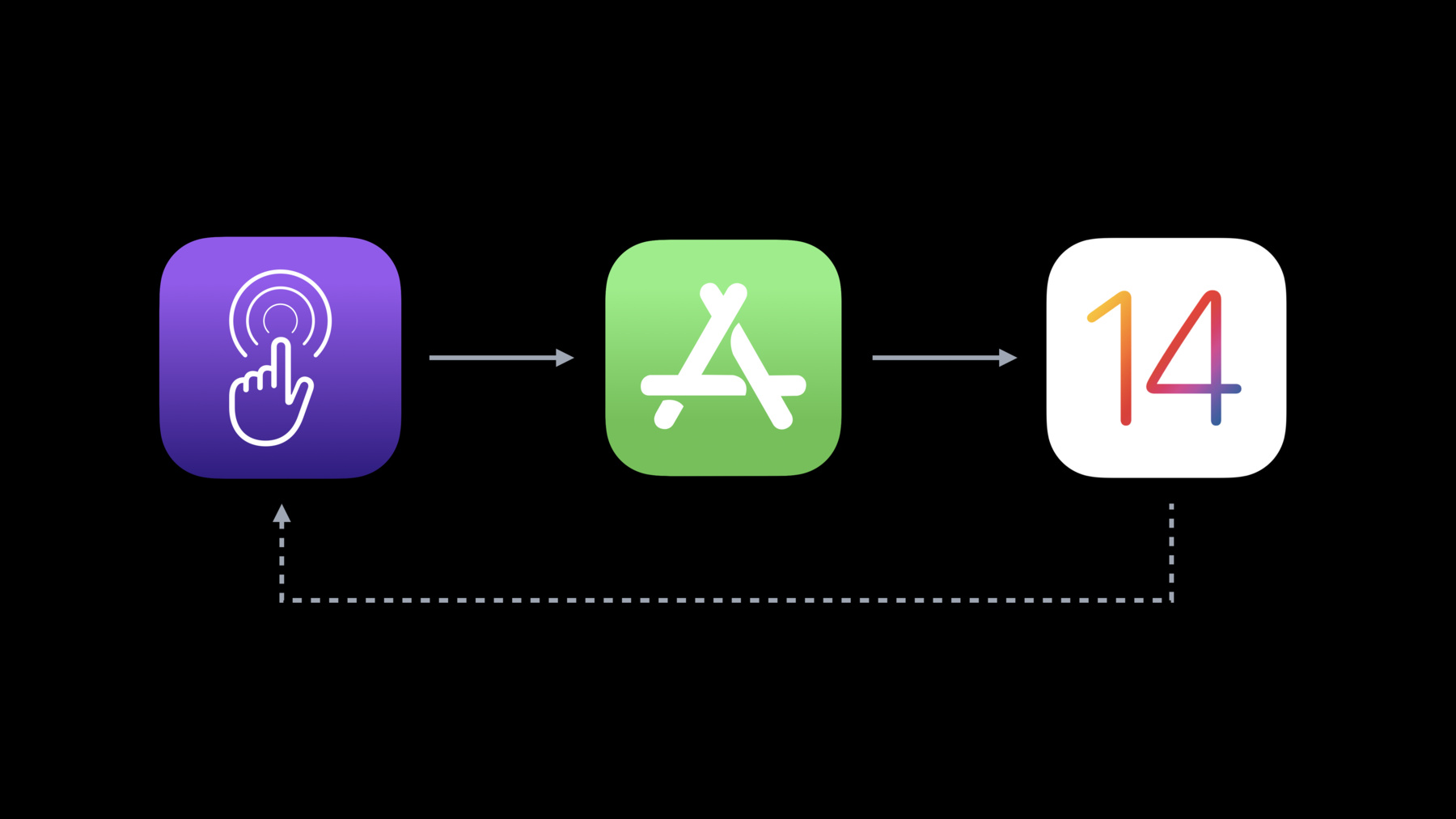

In Instruments 12, we released a new Instrument template to profile hitches in your apps.

Some users have complained about hitches in the Meal Planner app, and I'd like to investigate, so I begin in Instrument and start scrolling in the app.

And here is the trace of the Meal Planner app, using the animation hitches template. I'm curious to dive into some of the hitches I saw while scrolling.

Let's zoom in and expand the Hitches track to find hitch 16.

Each track corresponds to a stage of the Render Loop we talked about earlier.

Along the top is the most important track. It shows hitch intervals. This is the amount of time since the frame should have been ready.

The User Events track shows the user events associated with the hitching frame.

The Commits track shows all commit phases sent to the render server during that frame. Make sure to watch "Find and Fix Hitches in the Commit Phase" for more on these tracks specifically.

And here's what we've been focused on in this video. The Renders and GPU tracks show the work performed by the render server.

The Frame Lifetimes track shows the entire duration that it took to compose the frame from event to display.

And finally, the Built-In Display track shows all the frames that appeared on display along with the VSYNCs that happened along the way.

You can compare the frame lifetime with the beginning of the hitch duration to visualize the expected interval that the frame should have been done composing. In this hitch, we were two frames over, and from following the VSYNCs, we can see that both the commit and the render phases went over time.

This interval is called the acceptable latency, and all time after that is the hitch duration.

Below the tracks, we see the detailed metrics of the hitches when the Hitches track is selected. We were looking at hitch 16. We can see the hitch time and the acceptable latency. This is how long we had to complete the frame.

The buffer count is the number of buffers used by the render server at the time of the hitch. The default value is two, but it can be three when a rendering frame has been delayed and the render server is trying to catch up. In double buffered mode, we have two frames, or 33.34 milliseconds on an iPhone, which is what we see in the latency column. Always remember to follow the Hitch Duration track above, which will always highlight the area of interest, no matter the buffer count.

Lastly, there's the hitch type. The hitch type surfaces the types of hitches and helps give you context on what to dive into in your app. Here, we see we have both expensive commits and expensive GPU time, which is what we saw in the tracks above. Expanding out, we can focus on the Render and GPU tracks and select them to see an analyzer with more information on the prepare and execute phases.

A crucial column is the render count, where we can see the number of offscreen passes that the GPU had to make. Because we know we had a render hitch, we need to look at these offscreens and understand what is causing them and how we could fix them. The best way to look at our layer tree is using the Xcode view debunker. So for that, let's go to a demo.

Here we are in the view debugger with our Meal Planner app paused. On the left, we see our view controllers, windows, constraints and views. But starting with Xcode 11.2, we can also show layers. If we click on Editor, let's click on the new Show Layers item.

Cool. Now on the left in the navigator we can click on any view and see its layer and all of its sublayers.

When we select a layer, we are presented this brand-new layer inspector, which services useful properties of our layer. So we can see here that we have our tag views backing layer, and we can see the background color, opacity, whether we have enabled masksToBounds and lots of other properties. Importantly, we can see the offscreen count. This is the number of offscreens it took to render this layer. Below that are what we call offscreen flags. These describe the reason for the offscreen.

Now, a given flag, like offscreen mask, for example, can trigger multiple numbers of offscreens. For example, here we have two.

But if we were to dig through each layer in our entire app to check its offscreen count, we still would not have enough insight to reduce any of these offscreen passes.

To help identify and suggest performance optimizations in your view and layer hierarchy, we added a new runtime issue type in Xcode 12 we call optimization opportunities.

These are enabled by default, but you can find the option in the Editor menu under Show Optimization Opportunities.

These optimization opportunities are an incredible resource developed and written by Apple's performance teams after years of optimizing apps' rendering performance.

These are meant to suggest simple yet valuable changes that will not affect the overall look of your layers. In our navigator, we can see the purple runtime issue indicator on some layers.

Here we have our star layer. We see in the inspector that it takes five offscreens. Highlighting over the indicator reveals the cause is because of dynamic shadows. Let's go to the runtime issue navigator to see more details.

It's here that we can read the message for the issue. It says that the layer is using dynamic shadows, which are expensive to render. If possible, try setting shadowPath or pre-rendering the shadow into an image and putting it under the layer.

We discuss this type of offscreen in the slides. The renderer does not have enough information and needs to draw the layer offscreen to figure out the shadow's shape. By using the shadowPath property on the CALayer, we can actually give the renderer the exact steps to use and eliminate all five offscreens.

These really add up in our app, so let's take a look at the code and make this change.

Here we are with our star layer, where we set the shadow. The optimization text told us to set the shadowPath, which accepts any CGPath. Let's reuse the star path that we already created.

Just like that, we've eliminated five offscreen passes per CollectionViewCell. That's a big fix.

Now back in our debug navigator, we still see some other runtime issue indicators. On our tag view, we see a runtime issue on the layer. In the navigator, we see two offscreens caused by an offscreen mask. This layer is composed of an image view and a label view. We were worried about things escaping the red background, so we added a mask layer.

In the runtime tool tip, we see that the cause is simple background color masking.

In the issue navigator, we see the opportunity text. It says that this layer is using a simple layer with a background color set as a mask. Instead, use a container layer of the same frame and corner radius as the mask, both masksToBounds set to "Yes." The offscreen is caused by the renderer needing to render our mask layer first, and the suggestion is to eliminate this layer altogether. Let's check it out in code.

Here we have our tag view. We create a mask layer and give it a black background color and corner radius. This mask layer is simple, and any simple layer should never be a mask. Instead, we should just define this requested mask shape on the actual layer so the renderer can optimize the drawing.

We can instead set the corner radius to 10, like we wanted, and then set masksToBounds equal to "True." Then delete the mask altogether.

But that only eliminates one offscreen. Because we have sublayers, masksToBounds will need to do an offscreen pass to make sure the views are clipped correctly. But in our view, we've ensured that sublayers cannot exceed the bounds of our tag view. So in fact we actually do not need masking at all. Let's delete that masksToBounds call. And now we've eliminated both offscreens. Awesome. So far with three lines of code, we've saved seven offscreens per CollectionViewCell. This is a tremendous improvement, but we have one more issue to explore. Let's head back to the navigator.

Let's select our image view. We see that it has rounded corners, and when we highlight the runtime issue, we see it says, "simple shape masking." Once again, we will go to the runtime navigator to learn more.

It says that the layer is masked by a CAShapeLayer with a path that's a rect, a roundedRect or an ellipse. Instead, use an appropriately transformed container layer with cornerRadius and masksToBounds set.

The offscreen is caused by the renderer needing to mask to another layer again. But this time, it's not a simple layer. Let's look at the code to understand what's happening.

Here in the code, we see we have our image view. First, we create a CAShapeLayer and use a UIBezierPath API to create a path and then use that as the mask.

What's happening here is that we're using a shape layer, which can be a valid type of mask. But Xcode is able to detect that rather than a complex shape, we're just creating a rounded rectangle. The reason we wrote this code is because we were trying to create a special type of rounded rectangle sometimes called a squircle.

These type of rounded rectangles are very popular in iOS. But this is not the best way to get that effect. Starting in iOS 13, we can use the cornerCurve property to make the cornerRadius effect into a squircle shape. Now we can eliminate shapeLayer altogether and just set cornerRadius and cornerCurve to "continuous." Just like that, we've eliminated two more offscreens by just using the provided API. So we were able to really optimize our offscreen count and bring it from 36 down to zero. That's amazing.

Back in the view debugger, we see some other runtime issues that are not render related. Nonetheless we'd like to show them to our coworker Charles so he can try and optimize them. Before, this would require Charles having the same device as us and attaching to his app and pausing it in the view debugger.

But now we are able to save the state of our view debugger so that we can send it in an e-mail, share it with coworkers or even attach it to feedback reports. To do this, we can select File, Export View Hierarchy...

clicking "Save," and voilà. We now have a sendable file encapsulating all of our views, layers, constraints and runtime issues. This makes it much easier to collaborate remotely and will really help as you try tracking down all your unnecessary offscreens.

Now that we've seen how the Xcode view debugger can highlight offscreen issues in our app, let's recap some recommendations.

The most important thing to do in every app is to always use the provided APIs. When setting a shadow, make sure to set a shadowPath to save a large number of offscreen passes. When rounding a rectangle, use the cornerRadius and cornerCurve properties. Avoid using masks or corner contents to form rounded rectangle shapes. These cause unnecessary offscreens.

For most layers, creating a UIBezierPath by rounding the layer's bounds to its cornerRadius is all that's needed to set a good shadowPath.

The second step is to optimize masking across your app. Use masksToBounds to mask to a rectangle, rounded rectangle or ellipse. It's much more performant than custom mask layers. Overall, make sure masking is actually required. If content in the subtree will not exceed the bounds, then disable masksToBounds altogether.

These are only recommendations. It's important to profile your app using Instruments and use the optimization opportunities to inspect your layer tree for simple yet crucial tips to lowering your overall offscreen count. Lastly, remember that you can save the state of the view hierarchy to share with your team or even attach feedbacks in the feedback reporter app. Overall, these tools and the recommendations will help you avoid render hitches. For commit hitches, make sure to check out "Find and Fix Hitches in the Commit Phase." Together, these talks will help you reduce your app's hitch time ratio and keep your users scrolling smooth.

Thanks for watching.

-

-

0:01 - Shadow Path

// Setup shadow properties view.layer.shadowColor = UIColor.black.cgColor view.layer.shadowOpacity = 0.5 // Set a shadow path on a basic layer view.layer.shadowPath = UIBezierPath(roundedRect: view.layer.bounds, cornerRadius: view.layer.cornerRadius).cgPath

-