Add rich graphics to your SwiftUI app

Learn how you can bring your graphics to life with SwiftUI. We'll begin by working with safe areas, including the keyboard safe area, and learn how to design beautiful, edge-to-edge graphics that won't underlap the on-screen keyboard. We'll also explore the materials and vibrancy you can use in SwiftUI to create easily customizable backgrounds and controls, and go over graphics APIs like drawingGroup and the all-new Canvas view. With these tools, it's simpler than ever to design fully interactive and interruptible animations and graphics in SwiftUI.

Resources

- Add rich graphics to your SwiftUI app

- Composing SwiftUI gestures

- Adding interactivity with gestures

- GestureState


Hi, I'm Jacob, and welcome to "Add Rich Graphics to Your SwiftUI App." I'm working on an app to build gradients with a few of my colleagues. This year, colors are the hot thing on our team. Most of the implementation is already done. Now I just need to finish it by adding some rich graphics.

As we customize the app, we're going to see a few different areas: working with the safe area, including customizing it; new foreground style support; a rich new set of materials; and drawing with Canvas, a powerful new view. So let's get started.

I'll show you what's in the app so far. We have a library of gradients, and I can view those gradients. There's something about this one I really like. I just can't put my finger on it. I can also edit a gradient... which lets me change the color stops.

And I can add a new gradient.

I can also use these gradients in some visualizers... But one step at a time. We'll look at those a bit later.

For now, let's focus on this gradient detail view. It's functional, but our actual content is pretty small relative to the chrome and empty space. I want the gradient to really take over for this screen. So let's start editing it in Xcode.

This is our main detail view, and it's used for our editing mode as well. Let's start with isEditing false, and we'll look at the editing mode later. Let's make our gradient use as much of the space as possible by deleting this frame.

And now that the gradient is taking up all of the height, we no longer need this spacer.

We can go even further by putting our controls on top of this gradient by changing this to a ZStack.

If you haven't seen a ZStack before, it lays elements out on top of each other instead of next to each other. Let's move our editing controls to the bottom.

And we only need padding on the controls, not on our gradient... so let's move this.

You may be wondering why there's still empty space at the top and bottom of the gradient, even after we've removed the padding. By default, SwiftUI positions your content within the safe area, avoiding anything that would obscure or clip your view, like the Home indicator or any bars that are being shown. The safe area is represented as a region that is inset from the outermost full area where a view is shown. Content in the safe area is automatically laid out within the appropriate insets to avoid those areas where it would be obscured.

The safe area is also how SwiftUI helps you avoid drawing content under the keyboard. So in our app, our controls will automatically lift out of the way of the keyboard. This works the same way because there are multiple different safe areas. The most common one is the container safe area, which is driven by the container a view is shown within and includes things like bars and device chrome. Additionally, there is a keyboard safe area for avoiding the keyboard. And note that the keyboard safe area is always a region within the container safe area. It keeps content safe from the same areas as the container safe area in addition to the keyboard.

It's possible to opt out of safe areas. Usually you won't need to do this, since most content should be within the safe area so it isn't clipped. It is safe, after all. But ignoring the safe area can make sense for backgrounds or other content that you want to go fully edge to edge. You can use this code to opt out of all safe areas or specify the keyboard region to just opt out of the keyboard safe area. Let's add ignoresSafeArea to our linear gradient to get that full-bleed effect.

This Edit button isn't very visible on top of our gradient, so let's only ignore the safe area on the bottom edge.
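As a rough sketch of this step, the gradient can ignore the safe area on a single edge by passing `edges:` to `ignoresSafeArea`. The view name `GradientBackdrop` and its colors are hypothetical stand-ins, not the session's actual code.

```swift
import SwiftUI

// Hypothetical stand-in for the gradient in the detail view.
struct GradientBackdrop: View {
    // Placeholder colors; the real app would use the selected gradient's stops.
    let colors: [Color] = [.purple, .orange]

    var body: some View {
        LinearGradient(colors: colors, startPoint: .top, endPoint: .bottom)
            // Extend past the safe area at the bottom only, so the top bar
            // and its Edit button keep their normal backdrop.
            .ignoresSafeArea(edges: .bottom)
    }
}
```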

Now, to make sure we don't run into the same problem with this bottom text being illegible from the gradient, let's add a background behind it.

We'll customize the background in a minute, but let's start with the most simple default, which gives us a white background that automatically changes in Dark Mode.

And this background also extends beyond the safe area automatically. This version of background and its behavior is new in iOS 15 and aligned OS releases.

Let's look at how it works. Let's start with a container view and its safe area. Then we have our content view, which will just be within the safe area to keep it legible. If we naively added a background with the same bounds as the view it's applied to, we'd get this. But if we apply the ignoresSafeArea modifier to just the background view, then it will expand beyond the safe area while keeping the main content nice and safe. The new background modifier gives you this behavior automatically.

Let's go back to our background and start customizing it. We can pass in a specific style, which could be a color or any other style, like a gradient.

It doesn't really make sense in this app, but let's look at something pastel colored.

I can also pass in a shape to clip this background to... For example, a rounded rectangle.

Notice that when I use a custom shape, the background no longer extends out of the safe area so that the shape matches your content's bounds. What I think would fit our app better is a blur for our background. We can use another new API to do that: Materials. Materials are a set of standard blur styles that you can apply. And let's make this background go back to taking up the whole area.

Materials are great for places where we want colorful content like this to show through. There's a set of different materials you can choose from, going from Ultra Thin to Ultra Thick. And all of these automatically show the right design on every platform.

I'm going to use a thin material here.
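The background steps above might look roughly like the following. This is a minimal sketch: `GradientCaption` and its properties are hypothetical names, and the layout is only a guess at the bottom bar shown in the session.

```swift
import SwiftUI

// Hypothetical bottom bar showing a gradient's name and stop count.
struct GradientCaption: View {
    let name: String
    let stopCount: Int

    var body: some View {
        VStack {
            Text(name)
            Text("\(stopCount) colors")
        }
        .padding()
        .frame(maxWidth: .infinity)
        // A style passed without a shape extends past the safe area by default.
        .background(.thinMaterial)
        // Shape variant, which stays within the content's bounds instead:
        // .background(.thinMaterial, in: RoundedRectangle(cornerRadius: 10))
    }
}
```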

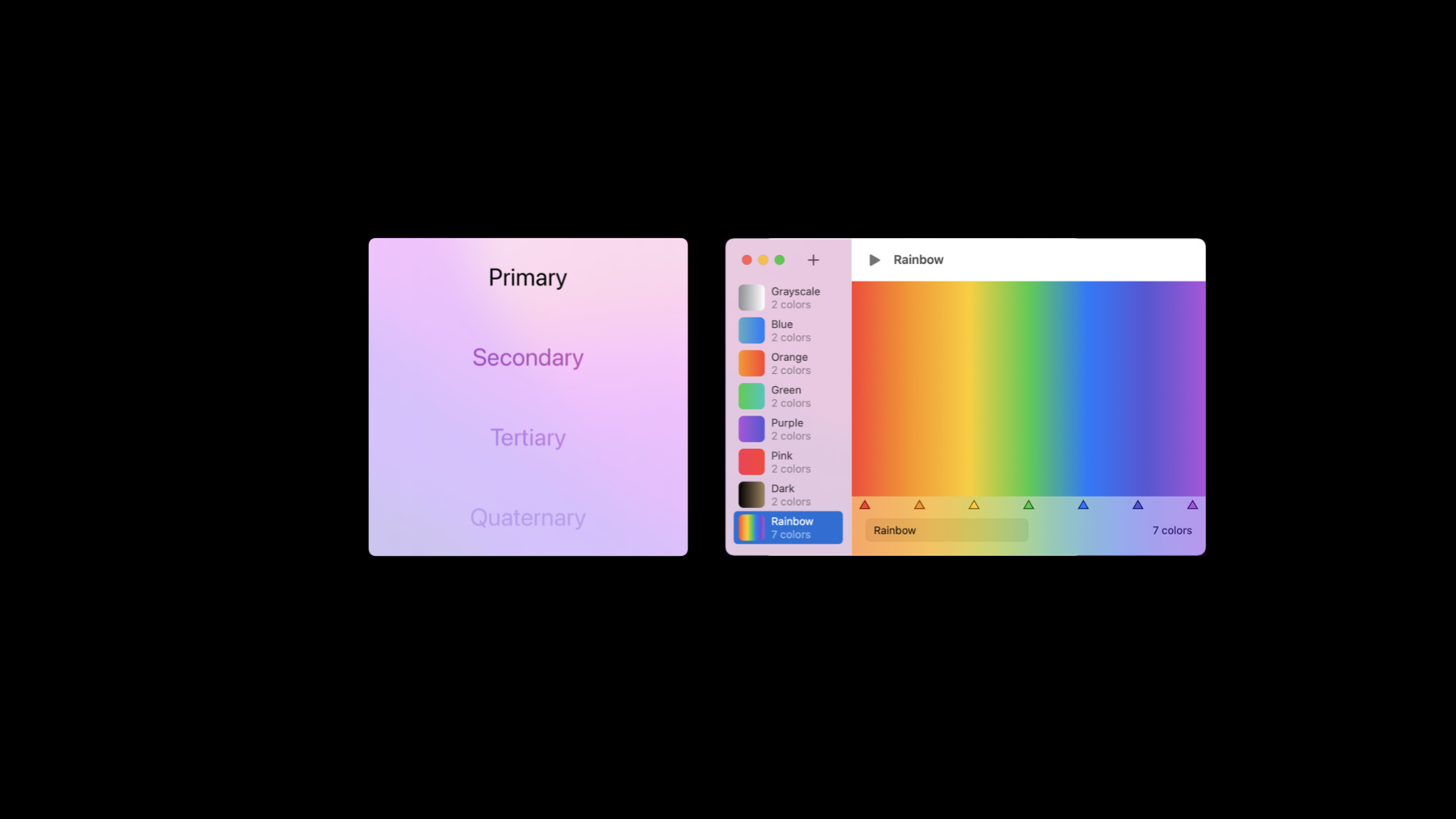

Next, I want to customize our text. Let's make the number of colors a little less prominent to show that the name is the primary information here.

I can do that by setting a foreground style of secondary.

You may have noticed that the secondary content is automatically shown with an effect called Vibrancy, which blends the colors behind it. In SwiftUI, there's no extra API for this effect. It happens automatically when you use the new Secondary through Quaternary styles in a material context. That can happen when you explicitly add a background with a material, like we just did, or when your content is in a system component, like a sidebar, that adds the material for you.

And these styles have a lot of automatic smarts. They automatically do the right thing when used in a non-blurred context as well, where they don't use a vibrant effect. They also automatically change their behavior as you set a color on them, setting versions of the color for each level. And the same support works for setting any base foreground style, even things like gradients. Please use tastefully.

One thing to note: Any given text can have a single foreground style applied to it, but multiple colors within its ranges. So for example, I could use string interpolation to embed an inner Text...

And then apply a foregroundColor of red... to the word "colors." And it will show that color, automatically opting out of vibrancy for that range.
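A sketch of that interpolation, assuming a hypothetical `ColorCountLabel` view with a `stopCount` property. The outer text takes the secondary style, while the embedded `Text` carries its own color.

```swift
import SwiftUI

// Hypothetical label; `stopCount` stands in for the gradient's number of stops.
struct ColorCountLabel: View {
    let stopCount: Int

    var body: some View {
        // The inner Text is embedded through string interpolation and keeps
        // its explicit red color for just that range.
        Text("\(stopCount) \(Text("colors").foregroundColor(.red))")
            // One foreground style applies to the text as a whole.
            .foregroundStyle(.secondary)
    }
}
```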

Even more importantly, with these foreground styles, for the first time ever, you get the right behavior with embedded emoji, where they just work.

This is looking good. Let's run it again and try out Edit mode with these changes too.

It mostly works already. And these colors go under the blur, which is great. But if you look closely, it's not doing quite the right thing. When I scroll all the way down, there's a little bit of the list that's hidden behind the blur.

Let's look more closely at what's happening. Let's take away the chrome and only look at the relevant views, here. If we slide these views apart a bit horizontally, we can see this is because the bar is just ZStacked on top of our content. Now that we want to see all of the view in the back, that's not the right behavior. We could change to a VStack here, but without the list under the blur, we wouldn't get any of the color showing through when scrolling down.

We want the background of the list and its scrollable area to extend under the bar but not its main content. And this is exactly what the safe area is for. If we make the safe area get inset by this bar, then any important content will stay unobscured. To customize the safe area of our own views, we can use a new modifier: safeAreaInset. This lets us add auxiliary content, like our bar, over the main content. I'll replace our ZStack...

With a safeAreaInset...

Using an edge of .bottom...

And put our controls into that. Let's run it again to check.
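Roughly, the replacement for the ZStack could be sketched like this. `StopList` and its contents are hypothetical; only the `safeAreaInset(edge:)` call reflects the modifier named above.

```swift
import SwiftUI

// Hypothetical editing list with a bar inset at the bottom.
struct StopList: View {
    // Placeholder colors standing in for a gradient's stops.
    let colors: [Color] = [.purple, .orange, .pink]

    var body: some View {
        List(colors.indices, id: \.self) { index in
            Text("Stop \(index + 1)")
                .listRowBackground(colors[index])
        }
        // The bar sits over the list and insets the list's safe area, so the
        // last row can scroll clear of the bar while colors still pass under the blur.
        .safeAreaInset(edge: .bottom) {
            Text("\(colors.count) colors")
                .padding()
                .frame(maxWidth: .infinity)
                .background(.thinMaterial)
        }
    }
}
```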

This view still looks the same, which is good. That's because it's ignoring the safe area.

And in Edit mode...

We can still scroll under the bar to get that blur. But when we scroll to the bottom, nothing is obscured. Great. Next, let's look at our visualizers.

Let's start with the Shapes visualizer, which is already written.

It shows a large number of random shape symbols, each drawn with one of the gradients from the app. I can tap on a symbol to zoom it up...

Or tap in the background to reposition all of the symbols.

And if you've seen our SwiftUI animation demos before, you know that it's always interactive and interruptible. So I can keep rearranging...

And even tap to select and deselect shapes while that's happening.

If I go look at the code...

It's using a common technique for drawing graphics in SwiftUI. There's a GeometryReader so I can read the view's size to lay out all of these graphics and a ZStack to help me position them.

And at the end of the body, there's a modifier you may have seen before: drawingGroup. A drawingGroup tells SwiftUI to combine all of the views it contains in a single layer to draw. This works well for graphical elements like these but shouldn't be used with UI controls like text fields and lists. This is a great technique to use when you want to show a large number of graphical elements like we're doing here.

And one of the benefits of drawingGroup is that even though these views are drawn differently, you can still use the same functionality from SwiftUI that you use everywhere else in your app. For example, here, we have a gesture applied to each symbol for tapping on them and also an animation that applies when we change selection or reposition them. The accessibility information contained in these views is also passed up normally, for example, these accessibility actions on each symbol.

However, to support all of these features, there is some bookkeeping and storage required for each view. If you have a high enough number of elements, then even that extra overhead might be too much. And for those cases, we've introduced a new Canvas view. Our next visualizer will show a complex particle system, and it isn't written yet. Let's go build it. Let's start with our Canvas view to draw it.

This lets us make a closure that's run every time the canvas is drawn and contains our drawing commands. If you're familiar with drawRect in UIKit or AppKit, this works pretty similarly. This closure gives us a context, which is what we send drawing commands to, and a size that we can use to get the size of the entire canvas. Let's start by just drawing an image. I can create one using the same Image type I use in the rest of my SwiftUI code.

And let's tell the context to draw our image.

When we draw it at 0,0, it's up here, centered at the origin, where it's not very visible. Since we have the size of the whole canvas available, let's use that to draw it in the middle instead.
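A minimal sketch of this first Canvas, assuming the "sparkle" SF Symbol as the image and a hypothetical view name `SingleSparkle`:

```swift
import SwiftUI

// Hypothetical view that draws one image in the middle of a Canvas.
struct SingleSparkle: View {
    var body: some View {
        Canvas { context, size in
            // The same Image type used elsewhere in SwiftUI.
            let image = Image(systemName: "sparkle")
            // The image is centered on the given point by default, so use
            // the midpoint of the canvas rather than the origin.
            context.draw(image, at: CGPoint(x: 0.5 * size.width, y: 0.5 * size.height))
        }
    }
}
```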

And one thing you can see if I change our preview to be in Dark Mode...

Is that our image automatically flips to draw in white, since it's using the same foreground style that we saw earlier. Since we want to build a particle system, let's draw this image a few more times.

Note that this closure is for imperative code. It's not a ViewBuilder. So I can just use a normal for loop.

And let's shift each image a bit so we can actually see them.

Now, we're drawing this image several times, but each time, the context needs to resolve it to evaluate it based on things like the current environment, even though each time, it's the same image. We can improve this by resolving the image ourselves before drawing it.

Now we have better performance because we're sharing the same resolved image, but the resolved image also lets us do some other things.

We can now ask for its size and baseline. In our case, we'll use its size to shift each one by the right amount.
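Resolving once and reusing the result could look roughly like this. The loop count of three and the view name `SparkleRow` are illustrative assumptions.

```swift
import SwiftUI

// Hypothetical view that draws the same resolved image several times.
struct SparkleRow: View {
    var body: some View {
        Canvas { context, size in
            // Resolve once, outside the loop, so every draw shares the result.
            let image = context.resolve(Image(systemName: "sparkle"))
            let imageSize = image.size

            // The closure is imperative code, so a plain for loop works.
            for i in 0..<3 {
                // Shift each copy by one image width.
                let center = CGPoint(
                    x: 0.5 * size.width + CGFloat(i) * imageSize.width,
                    y: 0.5 * size.height)
                context.draw(image, at: center)
            }
        }
    }
}
```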

Next, let's add ellipses behind our sparkles. I'm going to draw them in the same region. So let's pull out a frame to draw them both in.

I'll create a CGRect with the same X and Y values and use our imageSize for the width and height...

Then draw our image in that frame.

Because each drawing operation is done in order, to put our ellipse behind the image, we need to draw it first. And we can draw it with context.fill...

Which takes a path and a shading. You can construct a path with standard bezier curves, but here's a tip: You can also use shapes like ellipse and ask them for their path in a given rectangle.

The other argument is a shading, which is what to fill our path with. And this can use the same styles as the rest of our SwiftUI app. Let's use a cyan color.
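Put together, the frame, fill, and draw steps might be sketched as follows. `SparkleWithEllipse` is a hypothetical name, and the frame's origin is an assumption chosen for illustration.

```swift
import SwiftUI

// Hypothetical view that draws an ellipse behind a resolved image.
struct SparkleWithEllipse: View {
    var body: some View {
        Canvas { context, size in
            let image = context.resolve(Image(systemName: "sparkle"))
            let imageSize = image.size

            // One frame shared by the ellipse and the image.
            let frame = CGRect(
                x: 0.5 * size.width,
                y: 0.5 * size.height,
                width: imageSize.width,
                height: imageSize.height)

            // Operations happen in order, so fill the ellipse first to put it behind.
            // A shape can be asked for its path in a given rectangle.
            context.fill(Ellipse().path(in: frame), with: .color(.cyan))
            context.draw(image, in: frame)
        }
    }
}
```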

And there are the ellipses. There's not a lot of contrast with the images, though. Let's fix that. Graphics context has many standard drawing properties, like opacity, blend modes, transforms, and more. Let's set an opacity here. And we can look at an area where this context behaves a little differently from what you might be used to. If I just set an opacity on the context, then it behaves as you'd expect. It affects all operations that happen afterwards.

In the past, if I wanted to make a change to a graphics context only applied to some of my drawing operations, I would have to bracket those operations in a save and restore call. But with a SwiftUI context, all I have to do is make changes on a copy.

And those changes only affect drawing done with the modified context. Drawing done on the original context is unaffected.
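A sketch of the copy technique, assuming an opacity of 0.5 (the transcript does not state the value) and a hypothetical view name `FadedEllipse`:

```swift
import SwiftUI

// Hypothetical view showing a change scoped to a copy of the context.
struct FadedEllipse: View {
    var body: some View {
        Canvas { context, size in
            let image = context.resolve(Image(systemName: "sparkle"))
            let frame = CGRect(
                x: 0.5 * size.width,
                y: 0.5 * size.height,
                width: image.size.width,
                height: image.size.height)

            // GraphicsContext is a value type, so this is an independent copy.
            var innerContext = context
            innerContext.opacity = 0.5 // assumed value, for illustration

            // Only drawing done through the copy is affected by the opacity.
            innerContext.fill(Ellipse().path(in: frame), with: .color(.cyan))
            // The original context still draws at full opacity.
            context.draw(image, in: frame)
        }
    }
}
```

No save and restore bracketing is needed, because the original context never sees the change.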

Let's add some color to our image too. One other thing we can do with a resolved image is set a shading to control how symbols are drawn.

Let's set a blue color here.

That looks a little less bright than I was hoping for. Sometimes when you're drawing, the right blend mode can make a big difference. Blend modes control how colors are combined, especially with partial opacity, like we have here.

Let's set a screen blend mode. That combines colors so that they always get brighter. That looks better. There are many more drawing operations you can do. Check out the GraphicsContext type to see everything that's possible.

Now, to make this like a simulation, it needs to actually move. There are a few tools to make something change over time in SwiftUI. Animations are the most common, and they generally just happen automatically when you make a change. This year, we're introducing a new lower-level tool called TimelineView for when you want to control exactly how something changes over time. I can use a TimelineView by just wrapping it around the view that I want to change.

And I can configure it with a schedule, which tells it how often to update.

There are schedules for things like timers, but we're going to use an animation schedule to get updates as quickly as we can display them. If you're familiar with a display link, this works very similarly. And if you're not, that's totally OK. We get passed a timeline context that gives us information about what we should show.

I can pull out a time in seconds that we'll use to animate our images around.

Let's make our images move in a rotating oscillation. So I'll make an angle from the current time.

Let's have it loop every three seconds by using a remainder...

And multiply that by 120 to get 360 degrees.

And we'll get the X value with cosine. Or was it sine? I hope you remember your trigonometry.

Now let's use that value to change our offset...

And take our previews live to see how it looks.
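The timing steps can be sketched like this. The helper `oscillation(at:)` and the view `MovingSparkle` are hypothetical names introduced for illustration; the three-second loop and the factor of 120 come from the narration above.

```swift
import Foundation
import SwiftUI

// Hypothetical helper: maps a time in seconds to a horizontal factor in -1...1.
func oscillation(at seconds: Double) -> Double {
    // The remainder keeps the value within a three-second window; multiplying
    // by 120 spreads that window across 360 degrees.
    let angle = Angle.degrees(seconds.remainder(dividingBy: 3) * 120)
    return cos(angle.radians)
}

// Hypothetical view that moves one image back and forth.
struct MovingSparkle: View {
    var body: some View {
        // The animation schedule asks for updates as often as they can be displayed.
        TimelineView(.animation) { timeline in
            Canvas { context, size in
                let now = timeline.date.timeIntervalSinceReferenceDate
                let x = oscillation(at: now)

                let image = context.resolve(Image(systemName: "sparkle"))
                // Offset the image from the center by up to one image width.
                context.draw(image, at: CGPoint(
                    x: 0.5 * size.width + image.size.width * CGFloat(x),
                    y: 0.5 * size.height))
            }
        }
    }
}
```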

Nice. See how when they overlap, they get even brighter? That's our screen blend mode at work. Next, let's add some interactivity. Earlier, we looked at some of the interactions we can do by adding gestures to individual views. Remember that one of the tradeoffs of using a canvas is that the individual elements within it are combined into a single drawing. So we couldn't, for example, attach a gesture to these individual images. However, we can still add a gesture to the entire view. Let's add the ability to increase the number of sparkles shown. We'll add some state for how many to show.

And let's have it start with two. Let's use the count to control our loop.

Then we'll add a TapGesture to increment the count.

Let's update our preview.

And now we can tap to add sparkles.
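A rough sketch of the state and gesture, using the `onTapGesture` convenience modifier as one way to attach a tap to the whole canvas. `TappableSparkles` is a hypothetical name.

```swift
import SwiftUI

// Hypothetical view where a tap anywhere on the canvas adds a sparkle.
struct TappableSparkles: View {
    // How many sparkles to draw, starting with two.
    @State private var count = 2

    var body: some View {
        Canvas { context, size in
            let image = context.resolve(Image(systemName: "sparkle"))
            // The count controls the loop.
            for i in 0..<count {
                context.draw(image, at: CGPoint(
                    x: 0.5 * size.width + CGFloat(i) * image.size.width,
                    y: 0.5 * size.height))
            }
        }
        // The gesture applies to the canvas as a whole, not to individual images.
        .onTapGesture { count += 1 }
    }
}
```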

One other important aspect of using a canvas is that, since it's a single graphic, there's not any information about its contents available to Accessibility. To make this accessible, we'll use the standard accessibility modifiers to add information about our view.
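For instance, a label could be attached with the standard `accessibilityLabel` modifier. The label wording and the view name `LabeledCanvas` here are assumptions for illustration.

```swift
import SwiftUI

// Hypothetical canvas with an accessibility label describing it as a whole.
struct LabeledCanvas: View {
    var body: some View {
        Canvas { context, size in
            let image = context.resolve(Image(systemName: "sparkle"))
            context.draw(image, at: CGPoint(x: 0.5 * size.width, y: 0.5 * size.height))
        }
        // The canvas is a single graphic, so describe the entire view.
        .accessibilityLabel("A particle visualizer")
    }
}
```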

And for more advanced cases, there's a powerful new .accessibilityChildren modifier that lets you specify an arbitrary SwiftUI view hierarchy to use to generate accessibility information about a view. See "SwiftUI Accessibility: Beyond the Basics" for more information on how to use that.

We've built up a relatively simple use of Canvas, but it's designed to support much more complex uses, so let's spice things up a bit. One of my colleagues wrote some simulation code for me that works the same way as what we have here, but with a lot more elements doing more interesting things. I have the file he sent me here that I'll just paste into our view.

This code has the same structure as what we were just doing. We now have a long-lived model object that we're updating over time that keeps track of all of our particles. We have the same TimelineView and Canvas to animate and draw our content. We're updating our model with the new date, setting that screen blend mode, and telling each active particle to draw itself the same way as the ellipse that we just had. And finally, we have the same modifiers applied, just with a slightly more complex gesture. So let's see what it looks like.

It creates a new fireworks burst periodically, and we can also tap to add more bursts. And they're made using ellipses with the colors from the app's gradients.

One other great thing about drawing in Canvas is that it also works on watchOS, tvOS, and macOS. It's available on all SwiftUI platforms.

All right. We've finished our app. And along the way, we looked at working with and modifying safe areas, how to use foreground styles to control how content is drawn, how to use materials to get blurs and vibrancy, and we used Canvas and TimelineView to build complex animated graphics. I can't wait to see what amazing graphics you build in your apps.


3:53 - Ignoring safe areas

    // Ignore all safe areas
    ContentView()
        .ignoresSafeArea()

    // Ignore keyboard only
    ContentView()
        .ignoresSafeArea(.keyboard)

7:08 - Foreground Styles

    VStack {
        Text("Primary")
            .foregroundStyle(.primary)
        Text("Secondary")
            .foregroundStyle(.secondary)
        Text("Tertiary")
            .foregroundStyle(.tertiary)
        Text("Quaternary")
            .foregroundStyle(.quaternary)
    }

7:35 - Purple Foreground Styles

    VStack {
        Text("Primary")
            .foregroundStyle(.primary)
        Text("Secondary")
            .foregroundStyle(.secondary)
        Text("Tertiary")
            .foregroundStyle(.tertiary)
        Text("Quaternary")
            .foregroundStyle(.quaternary)
    }
    .foregroundStyle(.purple)

7:41 - Blue Gradient Foreground Styles

    let blueGradient = LinearGradient(
        colors: [.blue, .teal],
        startPoint: .leading,
        endPoint: .trailing)

    VStack {
        Text("Primary")
            .foregroundStyle(.primary)
        Text("Secondary")
            .foregroundStyle(.secondary)
        Text("Tertiary")
            .foregroundStyle(.tertiary)
        Text("Quaternary")
            .foregroundStyle(.quaternary)
    }
    .foregroundStyle(blueGradient)
