
Load resources faster with Metal 3

Discover how you can use fast resource streaming in Metal 3 to quickly load assets. We'll show you how to use an asynchronous set-it-and-forget-it workflow in your app to take advantage of the speed of SSD storage and the throughput of Apple silicon's unified memory architecture. We'll also explore how you can create separate queues that run parallel to — and synchronize with — your GPU render and compute work. Finally, we'll share how to designate assets like audio with high-priority queues to help you load data with lower latency.


WWDC22


♪ instrumental hip hop music ♪

Hi, my name is Jaideep Joshi, and I'm a GPU software engineer at Apple. In this session, I will introduce a new feature in Metal 3 that will simplify and optimize resource loading for your games and apps. I'll start by showing you how the fast resource loading feature can fit into your app's asset-loading pipeline. It has several key features that harness new storage technologies on Apple products. Fast resource loading has some advanced features that solve interesting scenarios your applications may run into. There are a few best practice recommendations you should know about that will help you effectively use these features in your apps. As you add fast resource loading to your apps, tools like Metal System Trace and the GPU debugger can help profile and fix issues you may run into. At the end, I'll walk through an example that shows fast resource loading in action. So here's what you can do with Metal 3's fast resource loading.

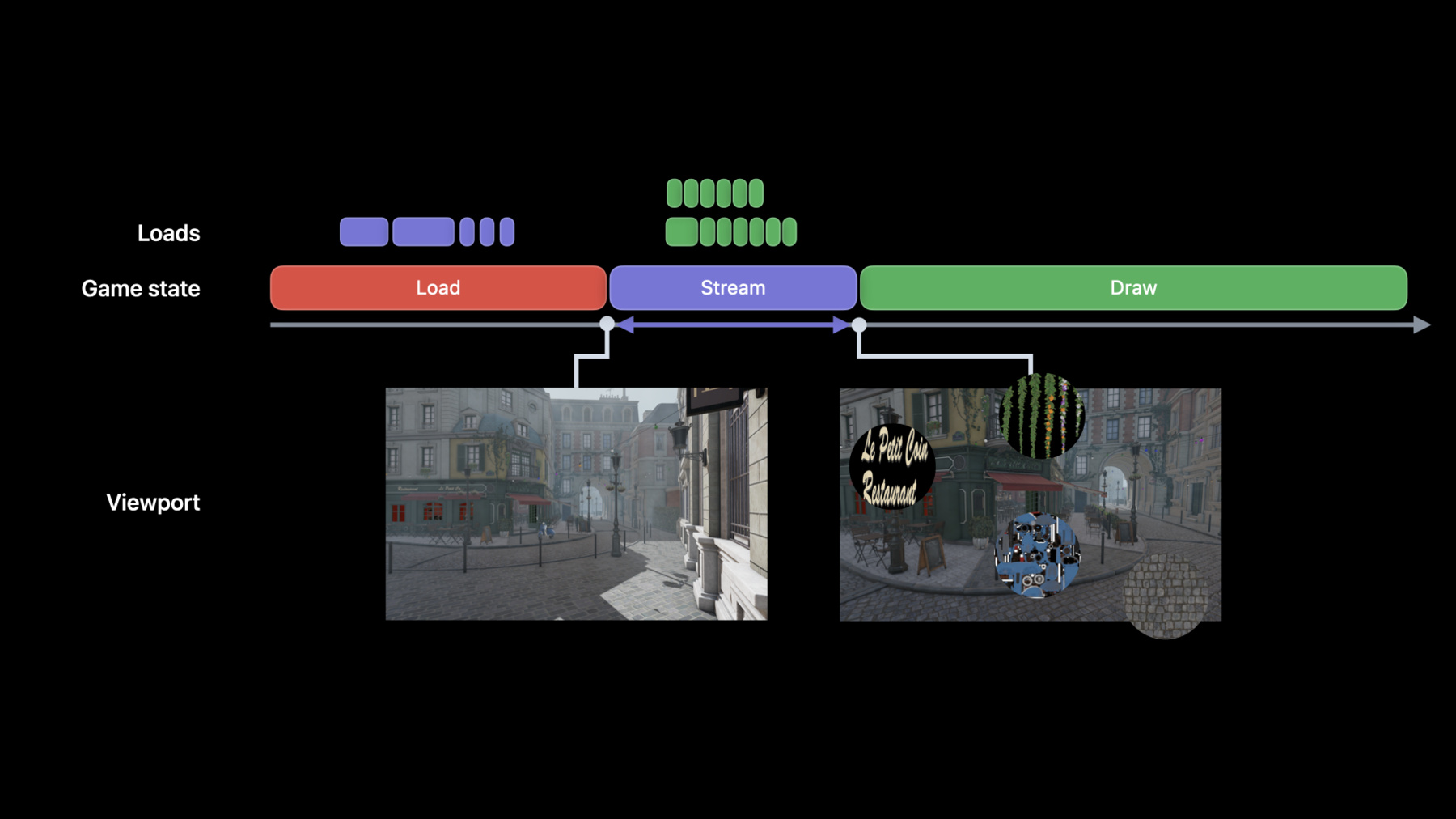

With Metal 3's fast resource loading, your games and apps can load assets with low latency and high throughput by taking advantage of the Apple silicon unified memory architecture and fast SSD storage included with Apple platforms. You will learn the best ways to stream data and reduce load times to ensure that your game's assets are ready on time. A key aspect of reducing load times is to load only what you need at the smallest possible granularity. The high throughput and low latency in Metal 3 lets your apps stream higher-quality assets, including textures, audio, and geometry data.

Now I'll walk you through an example of asset loading in a game. Games typically show a loading screen when they first start up, or at the beginning of a new level, so they can load the game's assets into memory. As the player moves through the level, the game loads more assets for the scene. The downside is the player has to wait a long time while the game makes multiple requests to the storage system to load assets up front. Plus, those assets can have a large memory footprint.

There are a few ways to improve this experience. One is to dynamically stream objects as the player gets closer to them. This way, the game only loads what it needs at first and gradually streams other resources as the player moves through the level. For example, the game initially loads this chalkboard at a lower resolution, but as the player walks towards it, the game loads a higher-resolution version. This approach reduces the time the player waits at the loading screen. However, the player might still see lower-resolution items in the scene even when they are up close, because it takes too long to load the higher-resolution versions.

One way to deal with this is to stream smaller portions of each asset. For example, your game could load only the visible regions of the scene with sparse textures that stream tiles instead of whole mip levels.
This vastly reduces the amount of data your app needs to stream. With that approach, the load requests get smaller, and there are more of them. But that's OK, because modern storage hardware can run multiple load requests at once. This means that you can increase the resolution and scale of your scene without compromising the gameplay. Along with issuing a large number of small load requests, you also have the ability to prioritize your load requests, to ensure that high-priority requests finish in time.

Now that I have covered the ways to boost visual fidelity of games while reducing load times, I'll show you how Metal 3's fast resource loading helps you do this. Fast resource loading is an asynchronous API that loads resources from storage. Unlike existing load APIs, the thread that issues the loads does not need to wait for the loads to finish. The load operations execute concurrently to better utilize the throughput of faster storage. You can batch load operations to further minimize the overhead of resource loading. And finally, with Metal 3, you can prioritize load operations for lower latency.

Now I'll show you the key features that will help you build your asset-loading pipeline, starting with the steps to load resources. There are three steps to load resources: open a file, issue the necessary load commands, and then synchronize these load commands with rendering work. Here's how you do that, starting with opening a file. You open an existing file by creating a file handle with a Metal device instance. For example, this code uses the Metal device instance to create a file handle by calling its new makeIOHandle method with a file path URL. Once you have a file handle, you can use it to issue load commands.

Here's a typical scenario in an application, where it performs load operations and encodes GPU work. With existing load APIs, the app has to wait for the loading work to finish before it can encode the rendering work.
Metal 3 lets your app asynchronously execute load commands. Start by creating a Metal IO command queue. Then use that queue to create IO command buffers and encode load commands to those buffers. Because command buffers execute asynchronously on the command queue, your app does not need to wait for the load operations to finish. In fact, not only do all commands within an IO command buffer execute concurrently, IO command buffers themselves execute concurrently and complete out of order. This concurrent execution model better utilizes faster storage hardware by maximizing throughput.

You can encode three types of IO commands to a command buffer: loadTexture, which loads to a Metal texture for texture streaming; loadBuffer, which loads to a Metal buffer for streaming scene or geometry data; and loadBytes, which loads to CPU-accessible memory.

You create IO command buffers from an IO command queue. To create a queue, first make and configure an IO command queue descriptor. By default, the queues are concurrent, but you can also set them to run command buffers sequentially and completely in order. Then pass the queue descriptor to the Metal device instance's makeIOCommandQueue method. Create an IO command buffer by calling the command queue's makeCommandBuffer method. Then use that command buffer to encode load commands that load textures and buffers. Metal's validation layer will catch encoding errors at runtime. The load commands are what use the fileHandle instance created earlier. When you are done adding load commands to a command buffer, submit it to the queue for execution by calling the command buffer's commit method.

Now that I've covered how to create IO command queues, command buffers, issue load commands, and submit them to the queue, I want to show you how you can synchronize loading work with the other GPU work. An app typically kicks off its rendering work after it finishes loading resources for that rendering.
But an app that uses fast resource loading needs a way to synchronize the IO command queue with the render command queue. You can synchronize these queues with a Metal shared event. Metal shared events let you synchronize the command buffers from your IO queue with the command buffers from your rendering queue. You can tell a command buffer to wait for a shared event by encoding a waitEvent command. Similarly, you can tell that command buffer to signal a shared event by encoding a signalEvent command. Metal ensures that all IO commands within the command buffer are complete before it signals the shared event. To synchronize between command buffers, you first need a Metal shared event. You can tell a command buffer to wait for a shared event by calling its waitForEvent method. Similarly, you can tell a command buffer to signal a shared event by calling its signalEvent method. You can add similar logic to a corresponding GPU command buffer so that it waits for the IO command buffer to signal the same shared event.

To recap, here are the key features and APIs that load resources in your Metal apps. Open a file by creating a Metal file handle. Issue load commands by creating an IO command queue and an IO command buffer. Then, encode load commands to the command buffer for execution on the queue. And finally, use wait and signalEvent commands with Metal shared events to synchronize loading and rendering.

Now, I'll go over a few advanced features that you might find helpful. Here's a typical scenario where a game can't fit its entire map in memory, which is why it subdivides the map into regions. As the player progresses through the map, the game starts preloading regions of the map. Based on the player's direction, the game determines that the best regions to preload are the northwest, west, and southwest regions. However, once the player moves to the western region and starts heading south, preloading the northwestern region is no longer beneficial.
To reduce the latency of future loads, Metal 3 allows you to attempt to cancel load operations. Let's look at how to do that in practice. When the player is in the center region, encode and commit IO command buffers for three regions. Then when the player is in the western region and heading south, use the tryCancel method to cancel the loads for the northwestern region. Cancelling is at the command buffer granularity, so you can cancel the command buffer mid-execution. If at some later point, you want to know whether the region was completely loaded, you can check the status of the command buffer.

Metal 3 also lets you prioritize your IO work. Consider a game scenario where the player teleports to a new part of the scene and your game starts streaming in large amounts of graphics assets. At the same time, the game needs to play the teleportation sound effect. Fast resource loading allows you to load all your app's assets, including audio data. To load audio, you can use the loadBytes command discussed earlier to load to application-allocated memory. In this example, texture and audio IO command buffers are concurrently executing on a single IO command queue. This simplified diagram shows the requests at the storage layer. The storage system is able to execute both audio and texture load requests in parallel. To avoid delayed audio, it is critical that the streaming system be able to prioritize audio requests over texture requests.

To prioritize audio requests, you can create a separate IO command queue, and set its priority to high. The storage system will ensure that high-priority IO requests have a lower latency and are prioritized over other requests. After creating a separate high-priority queue for audio assets, the execution time of the audio load requests has gotten smaller, while that of the parallel texture load requests has gotten larger. Here's how you create a high-priority queue. Simply set the command queue descriptor's priority property to high.
You can also set the priority to normal or low. Then create a new IO command queue from the descriptor as usual. Just remember that you cannot change a queue's priority level after you create it.

As you add fast resource loading to your apps, here are some best practices to keep in mind. First, consider compressing your assets. You can reduce your app's disk footprint by using built-in or custom compression. Compression lets you trade runtime performance for a smaller disk footprint. Additionally, you can improve storage throughput by tuning the sparse page size when using sparse textures. I'll go through each of these in more detail, starting with compression.

You can use Metal 3's APIs to compress your asset files offline. First, create a compression context and configure it with a chunk size and compression method. Then pass parts of your asset files to the context to produce a single compressed version of all your files. The compression context works by chunking all the data, compressing it with the codec of your choosing, and storing it in a pack file. In this example, the context compresses the data in 64K chunks, but you can choose a suitable chunk size based on the size and type of data you want to compress.

Here's how you use the compression APIs in Metal 3. First, create a compression context by providing a path for creating the compressed file, a compression method, and a chunk size. Next, get file data and append it to the context. Here, the file data is in an NSData object. You can append data from different files by making multiple calls to append data. When you're done adding data, finalize and save the compressed file by calling the flush and destroy compression context function. You can open and access a compressed file by creating a file handle. This file handle is used when issuing load commands.
For compressed files, Metal 3 performs inline decompression by translating the offsets to a list of chunks it needs to decompress, and loads them to your resources. You create a file handle with a Metal device instance. For example, this code uses the Metal device instance to create a file handle by providing the compressed file path to the makeIOHandle method I covered earlier. For compressed files, an additional parameter is the compression method. This is the same compression method you used at the time of creating the compressed file.

Now, I'll go through the different compression methods supported and the characteristics of each of them, so you can better understand how to choose between them. Use LZ4 when decompression speed is critical and your app can afford a large disk footprint. If a balance between codec speed and compression ratio is important to you, use ZLib, LZBitmap, or LZFSE. Amongst the balanced codecs, ZLib works better with non-Apple devices. LZBitmap is fast at encoding and decoding, and LZFSE has a high compression ratio. If you need the best compression ratio, consider using the LZMA codec, if your app can afford the extra time it takes to decode assets.

It is also possible to use your own compression scheme. You may have cases where your data benefits from a custom compression codec. In that case, you can replace the compression context with your own compressor and translate offsets and perform decompression at runtime yourself.

Now that you have seen how to use compression to reduce disk footprint, let's look at tuning sparse page size. Earlier versions of Metal support loading tiles to sparse textures at a 16K granularity. With Metal 3, you can specify two new sparse tile sizes: 64K and 256K. These new sizes let you stream textures at a larger granularity to better utilize and saturate the storage hardware.
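
To make the tile-size choice concrete, here is a minimal sketch that counts how many tiles overlap a visible region and how many bytes that implies. It is an illustrative model, not Metal API: the `tileCost` function and `TileCost` type are hypothetical, and the sketch assumes a 4-byte-per-pixel format in which square tiles of 64, 128, and 256 pixels occupy 16 KB, 64 KB, and 256 KB. Real tile dimensions depend on the pixel format.

```swift
// Illustrative model only (not Metal API). Assumes 4 bytes per pixel, so
// square tiles of 64, 128, and 256 pixels occupy 16 KB, 64 KB, and 256 KB.
struct TileCost {
    let tileCount: Int      // number of tile loads to issue
    let bytesStreamed: Int  // total bytes read if each tile is loaded whole
}

/// Counts the tiles that overlap a visible rectangle given in pixels.
func tileCost(x: Int, y: Int, width: Int, height: Int,
              tileEdge: Int, bytesPerPixel: Int = 4) -> TileCost {
    precondition(x >= 0 && y >= 0 && width > 0 && height > 0 && tileEdge > 0)
    let firstColumn = x / tileEdge
    let lastColumn = (x + width - 1) / tileEdge
    let firstRow = y / tileEdge
    let lastRow = (y + height - 1) / tileEdge
    let tiles = (lastColumn - firstColumn + 1) * (lastRow - firstRow + 1)
    let bytesPerTile = tileEdge * tileEdge * bytesPerPixel
    return TileCost(tileCount: tiles, bytesStreamed: tiles * bytesPerTile)
}

// Compare the three tile sizes for a 100 x 100 pixel region at the origin.
for edge in [64, 128, 256] {
    let cost = tileCost(x: 0, y: 0, width: 100, height: 100, tileEdge: edge)
    print("\(edge * edge * 4 / 1024) KB tiles: \(cost.tileCount) loads, \(cost.bytesStreamed) bytes")
}
```

For this region, the 16 KB tiles need four loads totalling 64 KB, the 64 KB tiles need one load of 64 KB, and the 256 KB tiles need one load of 256 KB. Larger tiles mean fewer requests, but past some point they also mean streaming data the scene does not use.
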
Note that there is a tradeoff between streaming larger tile sizes and the amount of data you stream, so you'll have to experiment to see which sizes work best with your app and its sparse textures.

Next, let's look at how you can use the set of Metal Developer Tools to profile and debug fast resource loading in your app. Xcode 14 includes full support for fast resource loading, from runtime profiling with Metal System Trace to API inspection and advanced dependency analysis with Metal debugger.

Let's start with runtime profiling. In Xcode 14, Instruments can profile fast resource loading with the Metal System Trace template. Instruments is a powerful analysis and profiling tool that will help you achieve the best performance in your Metal app. The Metal System Trace template allows you to check when load operations are encoded and executed. You will be able to understand how they correlate with the activity that your app is performing on both the CPU and the GPU. To learn how to profile your Metal app with Instruments, please check out these previous sessions, "Optimize Metal apps and games with GPU counters" and "Optimize high-end games for Apple GPUs."

Now, let's switch gears to debugging. With the Metal debugger in Xcode 14, you can now analyze your game's use of the new fast resource loading API. Once you take a frame capture, you will be able to inspect all fast resource loading API calls, from the IO command buffers created to the load operations that were issued. You can now visually inspect fast resource loading dependencies with the new Dependency viewer. The Dependency viewer gives a detailed overview of resource dependencies between IO command buffers and Metal passes. From here, you can use all the features in the new Dependency viewer, such as the new synchronization edges and graph filtering, to deep dive and optimize your resource loading dependencies. To learn more about the new Dependency viewer in Xcode 14, please check out this year's "Go bindless with Metal 3" session.

Now, let's look at fast resource loading in action. This is a test scene that uses the new fast resource loading APIs to stream texture data by using sparse textures with a tile size of 16 kilobytes. This video is from a MacBook Pro with an M1 Pro chip. The streaming system queries the GPU's sparse texture access counters to identify two things: the tiles it has sampled but not loaded and the loaded tiles the app isn't using.
The app uses this information to encode a list of loads for the tiles it needs and a list of evictions for tiles it doesn't need. That way, the working set contains only the tiles the app is most likely to use. If the player decides to travel to another part of the scene, the app needs to stream in a completely new set of high-resolution textures. If the streaming system is fast enough, the player will not notice this streaming occurring.

If I pause the scene, you can observe the image differences more clearly. The left side is loading sparse tiles on a single thread using the pread API. The right side is loading sparse tiles using the fast resource loading APIs. As the player enters the scene, most of the textures haven't fully loaded. Once the loads complete, the final high-resolution version of the textures is visible. If I go back to the beginning of this scene and slow it down, it is easier to notice the improvements that fast resource loading provides. To highlight the differences, this rendering marks tiles the app has not yet loaded with a red tint. At first, the scene shows that the app hasn't loaded most of the tiles. However, as the player enters the scene, fast resource loading improves the loading of high-resolution tiles and minimizes the delay compared to the single-threaded pread version.

Metal 3's fast resource loading helps you build a powerful and efficient asset-streaming system that lets your app take advantage of the latest storage technologies. Use it to reduce load times by streaming assets just in time, including higher-quality images. Use Metal's shared events to asynchronously load assets while the GPU renders a scene. For assets that your app needs in a hurry, minimize latency by creating a command queue with a higher priority. And remember, keep the storage system busy by sending load commands early. You can always cancel the ones you don't need.
Fast resource loading in Metal 3 introduces new ways to harness the power of modern storage hardware for high-throughput asset loading. I can't wait to see how you use these features to improve your app's visual quality and responsiveness. Thanks for watching. ♪


5:19 - Create a handle to an existing file

    // Create a Metal file IO handle to an existing file
    var fileHandle: MTLIOFileHandle!
    do {
        fileHandle = try device.makeIOHandle(url: filePath)
    } catch {
        print(error)
    }

6:49 - Create a Metal IO command queue

    // Create a Metal IO command queue
    let commandQueueDescriptor = MTLIOCommandQueueDescriptor()
    commandQueueDescriptor.type = MTLIOCommandQueueType.concurrent // or serial

    var ioCommandQueue: MTLIOCommandQueue!
    do {
        ioCommandQueue = try device.makeIOCommandQueue(descriptor: commandQueueDescriptor)
    } catch {
        print(error)
    }

7:17 - Create and submit a Metal IO command buffer

    // Create Metal IO command buffer
    let ioCommandBuffer = ioCommandQueue.makeCommandBuffer()

    // Encode load texture and load buffer commands
    ioCommandBuffer.load(texture,
                         slice: 0,
                         level: 0,
                         size: size,
                         sourceBytesPerRow: bytesPerRow,
                         sourceBytesPerImage: bytesPerImage,
                         destinationOrigin: destOrigin,
                         sourceHandle: fileHandle,
                         sourceHandleOffset: 0)

    ioCommandBuffer.load(buffer,
                         offset: 0,
                         size: size,
                         sourceHandle: fileHandle,
                         sourceHandleOffset: 0)

    // Commit command buffer for execution
    ioCommandBuffer.commit()
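
The texture load above takes a bytes-per-row value, a bytes-per-image value, and a file offset. As a rough sketch of where those numbers might come from, the hypothetical helper below computes them for one mip level. It assumes an uncompressed 2D texture whose mip levels are stored tightly packed, largest first, in a single file. That layout, the `MipLevelLayout` type, and the `mipLevelLayout` function are assumptions for illustration and are not part of Metal.

```swift
// Hypothetical helper (not Metal API). Assumes an uncompressed 2D texture
// whose mip levels are tightly packed in the file, largest level first.
struct MipLevelLayout {
    let width: Int
    let height: Int
    let bytesPerRow: Int    // candidate value for sourceBytesPerRow
    let bytesPerImage: Int  // candidate value for sourceBytesPerImage
    let fileOffset: Int     // candidate value for sourceHandleOffset
}

func mipLevelLayout(baseWidth: Int, baseHeight: Int, level: Int,
                    bytesPerPixel: Int) -> MipLevelLayout {
    precondition(baseWidth > 0 && baseHeight > 0 && level >= 0 && bytesPerPixel > 0)
    var offset = 0
    var width = baseWidth
    var height = baseHeight
    // Skip over the bytes of every larger level stored before this one.
    for _ in 0..<level {
        offset += width * height * bytesPerPixel
        width = max(1, width / 2)
        height = max(1, height / 2)
    }
    let bytesPerRow = width * bytesPerPixel
    return MipLevelLayout(width: width, height: height,
                          bytesPerRow: bytesPerRow,
                          bytesPerImage: bytesPerRow * height,
                          fileOffset: offset)
}

// Level 1 of a 1024 x 512 texture with 4 bytes per pixel.
let level1 = mipLevelLayout(baseWidth: 1024, baseHeight: 512, level: 1, bytesPerPixel: 4)
print(level1.bytesPerRow, level1.bytesPerImage, level1.fileOffset)
```

If your asset files use padding, block compression, or a different level order, the offsets need to come from your own file format instead.
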

8:51 - Synchronize loading and rendering with Metal shared events

    var sharedEvent: MTLSharedEvent!
    sharedEvent = device.makeSharedEvent()

    // Create Metal IO command buffer
    let ioCommandBuffer = ioCommandQueue.makeCommandBuffer()

    ioCommandBuffer.waitForEvent(sharedEvent, value: waitVal)

    // Encode load commands

    ioCommandBuffer.signalEvent(sharedEvent, value: signalVal)
    ioCommandBuffer.commit()

    // Graphics work waits for the IO command buffer to signal
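
The snippet above leaves open how `waitVal` and `signalVal` are chosen. One possible scheme, sketched here, is to hand out increasing values so that each batch of loads signals its own value on the shared event. The `EventTimeline` type is hypothetical bookkeeping and not part of Metal, and the sketch assumes that the batches signalling one event complete in order (for example, on a serial IO command queue). With a concurrent queue, where command buffers can complete out of order, a separate event per batch may be the simpler choice.

```swift
// Hypothetical bookkeeping type (not Metal API). Assumes the batches that
// signal one shared event complete in order, e.g. on a serial IO queue.
struct EventTimeline {
    var lastIssued: UInt64 = 0

    /// Reserves the value that the next IO command buffer will signal.
    mutating func reserveSignalValue() -> UInt64 {
        lastIssued += 1
        return lastIssued
    }

    /// True once the event's current value shows that the batch which
    /// signals `value` has finished loading.
    func isComplete(_ value: UInt64, currentEventValue: UInt64) -> Bool {
        return currentEventValue >= value
    }
}

var timeline = EventTimeline()
let texturesReady = timeline.reserveSignalValue()  // first batch signals this
let geometryReady = timeline.reserveSignalValue()  // second batch signals this

// The IO command buffer for each batch would signal its reserved value, and
// the graphics command buffer that consumes the batch would wait for it.
print(texturesReady, geometryReady)
```
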

10:29 - TryCancel Metal IO command buffer

    // Player in the center region
    // Encode loads for the North-West region
    ioCommandBufferNW.commit()

    // Encode loads for the West region
    ioCommandBufferW.commit()

    // Encode loads for the South-West region
    ioCommandBufferSW.commit()

    // Player in the western region and heading south
    // tryCancel NW command buffer
    ioCommandBufferNW.tryCancel()
    // ..
    // ..

    func regionNWCancelled() -> Bool {
        return ioCommandBufferNW.status == MTLIOStatus.cancelled
    }
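
Deciding which command buffers to cancel is game logic that the session leaves to the app. The sketch below shows one way that decision could be made for the map scenario: keep the region under the player and the three regions ahead of them, and treat every other in-flight load as a cancellation candidate. The `Cell` type and both functions are hypothetical, and the sketch assumes a grid map with axis-aligned headings.

```swift
// Hypothetical game-side logic (not Metal API). Regions are grid cells, with
// column 0 in the west and row 0 in the north; headings are axis-aligned.
struct Cell: Hashable {
    let column: Int
    let row: Int
}

/// The three cells directly ahead of the player.
func cellsAhead(of player: Cell, headingX: Int, headingY: Int) -> Set<Cell> {
    precondition(abs(headingX) + abs(headingY) == 1, "heading must be a unit step")
    var cells = Set<Cell>()
    for side in -1...1 {
        // Step one cell forward, then fan out sideways across the heading.
        let column = player.column + headingX + (headingY != 0 ? side : 0)
        let row = player.row + headingY + (headingX != 0 ? side : 0)
        cells.insert(Cell(column: column, row: row))
    }
    return cells
}

/// In-flight loads that are neither ahead of the player nor under them.
func loadsToCancel(inFlight: Set<Cell>, player: Cell,
                   headingX: Int, headingY: Int) -> Set<Cell> {
    let wanted = cellsAhead(of: player, headingX: headingX, headingY: headingY)
        .union([player])
    return inFlight.subtracting(wanted)
}

// Player starts in the center of a 3 x 3 map, heading west.
let inFlight = cellsAhead(of: Cell(column: 1, row: 1), headingX: -1, headingY: 0)
// Player reaches the western region and turns south.
let stale = loadsToCancel(inFlight: inFlight, player: Cell(column: 0, row: 1),
                          headingX: 0, headingY: 1)
print(stale)
```

In this run the only stale load is the north-west cell, which matches the scenario in the session. The app would then call `tryCancel` on the command buffer that holds that region's loads.
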

12:28 - Create a High Priority Metal IO command queue

    // Create a Metal IO command queue
    let commandQueueDescriptor = MTLIOCommandQueueDescriptor()
    commandQueueDescriptor.type = MTLIOCommandQueueType.concurrent // or serial

    // Set Metal IO command queue priority
    commandQueueDescriptor.priority = MTLIOPriority.high // or normal or low

    var ioCommandQueue: MTLIOCommandQueue!
    do {
        ioCommandQueue = try device.makeIOCommandQueue(descriptor: commandQueueDescriptor)
    } catch {
        print(error)
    }

14:04 - Create a compressed file

    // Create a compressed file

    // Create compression context
    let chunkSize = 64 * 1024
    let compressionMethod = MTLIOCompressionMethod.zlib
    let compressionContext = MTLIOCreateCompressionContext(compressedFilePath, compressionMethod, chunkSize)

    // Append uncompressed file data to the compression context
    // Get uncompressed file data
    MTLIOCompressionContextAppendData(compressionContext, filedata.bytes, filedata.length)

    // Write the compressed file
    MTLIOFlushAndDestroyCompressionContext(compressionContext)

15:05 - Create a handle to a compressed file

    // Create a Metal file IO handle to a compressed file
    var compressedFileHandle: MTLIOFileHandle!
    do {
        compressedFileHandle = try device.makeIOHandle(url: compressedFilePath,
                                                       compressionMethod: MTLIOCompressionMethod.zlib)
    } catch {
        print(error)
    }
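
The session describes inline decompression as translating offsets to a list of chunks. Metal does this for you with the built-in codecs, but if you use your own compression scheme you would do a similar translation yourself. The sketch below shows the arithmetic under the assumption of fixed-size chunks over the uncompressed data. The `chunksCovering` function is hypothetical and says nothing about how Metal implements this internally.

```swift
// Hypothetical helper for a custom compression scheme (not Metal API).
// Assumes the uncompressed data was split into fixed-size chunks.

/// Indices of the chunks that cover `size` bytes starting at `offset`
/// in the uncompressed data.
func chunksCovering(offset: Int, size: Int, chunkSize: Int) -> ClosedRange<Int> {
    precondition(offset >= 0 && size > 0 && chunkSize > 0)
    let first = offset / chunkSize
    let last = (offset + size - 1) / chunkSize
    return first...last
}

// A 50,000-byte read at offset 100,000 with 64K chunks.
let chunks = chunksCovering(offset: 100_000, size: 50_000, chunkSize: 64 * 1024)
print("Decompress chunks \(chunks.lowerBound) through \(chunks.upperBound)")
```

Here the read touches chunks 1 and 2, so 128 KB is decompressed to serve a read of about 49 KB. Smaller chunks reduce that overhead but tend to compress less well, which is one reason to tune the chunk size to your data.
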
